Owning the Execution Layer: The Frontier in AI-Driven Development

Key Takeaways

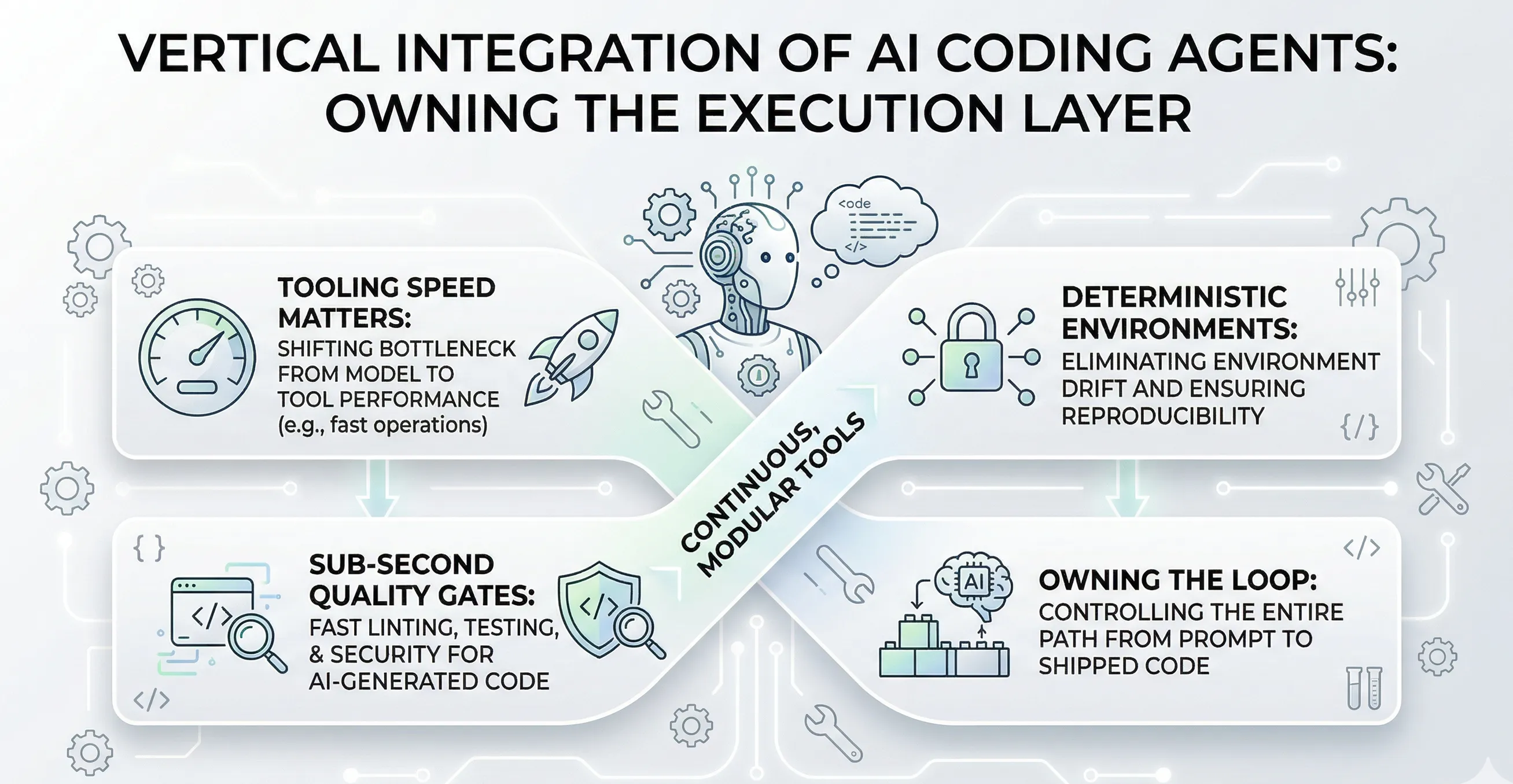

- OpenAI (Astral, Promptfoo, Rockset) and Anthropic (Bun, Vercept) are acquiring the execution layer underneath their coding agents — not developer tools for developers, but infrastructure for agents.

- The bottleneck shifted from model speed to tooling speed: uv is 10-100x faster than pip, ruff lints CPython in under 300ms, Bun’s test runner is 11x faster than Jest.

- Anthropic’s own engineering team demonstrated this bottleneck in practice: a multi-agent harness looping through plan-build-evaluate cycles for hours, where wall-clock time is dominated by execution — not model inference.

- Reliability matters more than speed: deterministic dependency resolution (uv --frozen, bun ci) eliminates the environment drift that makes agents fail in CI.

- AI-generated code has measurably higher defect rates (Snyk: 36-40% contain security vulnerabilities), making sub-second quality gates a requirement for autonomous code generation.

- The IDE becomes a host platform for multiple agents, each with its own vertically integrated toolchain. The engineering skills that matter most: constructing intent, building self-improving agent environments, and managing agents at scale.

OpenAI acquired Astral in March 2026. Three months earlier, Anthropic acquired Bun. Astral builds uv and ruff — Rust-based replacements for pip and flake8 that are orders of magnitude faster. Bun is the JavaScript runtime and package manager that Claude Code already ships as a compiled binary.

These aren’t developer relations plays. Both companies are buying the execution layer underneath their coding agents — the runtimes, linters, package managers, and test runners that determine how fast an agent can actually ship code.

What Actually Happened

OpenAI acquired Astral, the company behind uv (126 million monthly downloads at acquisition) and ruff. OpenAI also acquired Promptfoo (350,000+ developers, automated red-teaming for AI systems) and Rockset (real-time codebase indexing).

Anthropic acquired Bun shortly after Claude Code reached a $1 billion annual run-rate. Anthropic also acquired Vercept for autonomous UI interaction.

Two AI companies. Two language ecosystems. The same strategic move: own every layer between the model and running, tested code.

The Model Isn’t the Bottleneck Anymore

For years, AI labs competed on model quality — benchmarks, inference speed, context windows. That competition continues, but both companies hit a different wall: the tooling that executes between model output and working code.

The math is straightforward. When Codex generates code in 2 seconds but pip takes 20 seconds to install dependencies, the tooling is the bottleneck. When Claude Code runs continuous edit-run-test loops, the speed of each loop iteration — not just the model — determines throughput.
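To make that arithmetic concrete, here is a minimal sketch using the 2-second and 20-second figures from the paragraph above. The function names are hypothetical, and the model assumes one generation step followed by one tooling step per loop, which is a simplification of a real agent session.

```python
def loop_seconds(model_s: float, tooling_s: float) -> float:
    """Wall-clock time of one generate-then-execute iteration."""
    return model_s + tooling_s


def tooling_share(model_s: float, tooling_s: float) -> float:
    """Fraction of one iteration spent outside the model."""
    return tooling_s / loop_seconds(model_s, tooling_s)


def end_to_end_speedup(model_s: float, tooling_s: float, tooling_speedup: float) -> float:
    """Overall loop speedup when only the tooling step gets faster."""
    before = loop_seconds(model_s, tooling_s)
    after = loop_seconds(model_s, tooling_s / tooling_speedup)
    return before / after


if __name__ == "__main__":
    model_s, pip_s = 2.0, 20.0  # figures quoted in the text
    print(f"tooling share of the loop: {tooling_share(model_s, pip_s):.0%}")
    for factor in (10, 100):  # the quoted 10-100x range for uv vs pip
        speedup = end_to_end_speedup(model_s, pip_s, factor)
        print(f"{factor}x faster install -> {speedup:.1f}x faster loop")
```

Under these assumptions, tooling accounts for roughly 91% of the loop. A 10x faster install gives a 5.5x faster loop, and a 100x faster install gives about 10x, because the 2 seconds of model time becomes the floor.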

uv resolves and installs Python dependencies 10-100x faster than pip. Ruff lints the entire CPython codebase in under 300 milliseconds. Bun’s test runner executes large test suites 11x faster than Jest.

At the scale these companies operate — Claude Code alone hit a $1 billion annual run-rate — per-operation savings compound into material cost reductions. Every second shaved off dependency resolution, linting, or test execution across millions of weekly agent sessions directly reduces compute spend.

The Harness Pattern: Where the Bottleneck Lives

Anthropic’s engineering team published a post today showing what this bottleneck looks like in practice.

They built a multi-agent harness — a GAN-inspired architecture where three specialized agents loop through plan-build-evaluate cycles to autonomously develop full applications. A planner expands a short prompt into a multi-sprint specification. A generator implements features iteratively using a React, Vite, FastAPI, and PostgreSQL stack. An evaluator navigates the live, running application through Playwright — clicking through UI elements, testing API endpoints, and checking database state the way a user would.

Each evaluation cycle involves real execution: Vite rebuilds, Playwright navigations, server starts, database queries, screenshot captures. The evaluator runs 5 to 15 iterations per generation across multiple sprints. A single harness run stretches to four to six hours and costs $124–200.

The wall-clock time isn’t dominated by model inference. It’s dominated by execution. As the post notes:

“Because the evaluator was actively navigating the page rather than scoring a static screenshot, each cycle took real wall-clock time. Full runs stretched up to four hours.”

That’s the bottleneck these acquisitions are designed to eliminate. When every build-test-evaluate cycle hits the execution layer — the runtimes, the package managers, the test runners — the speed of that layer is a first-order cost driver. It’s the same logic that led Anthropic to acquire Bun: in a system where agents are repeatedly rebuilding and interacting with applications, the speed of each cycle compounds into hours and hundreds of dollars.

The Anthropic team’s closing observation reinforces the point: “The space of interesting harness combinations doesn’t shrink as models improve. Instead, it moves.” The harness pattern isn’t going away. The execution layer underneath it is only becoming more critical.
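The shape of such a loop can be sketched in a few lines. This is not Anthropic's implementation: `plan`, `build`, and `evaluate` are hypothetical callables supplied by the caller, and the sketch assumes planning and building are pure model calls while evaluation is pure execution. A real evaluator mixes both, so treat the split as a rough instrument.

```python
import time
from dataclasses import dataclass


@dataclass
class Timings:
    inference_s: float = 0.0   # time spent in planner and generator calls
    execution_s: float = 0.0   # time spent evaluating the running app

    def execution_share(self) -> float:
        total = self.inference_s + self.execution_s
        return self.execution_s / total if total else 0.0


def timed(fn, *args):
    """Call fn(*args) and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


def run_harness(prompt, plan, build, evaluate, max_iterations=15):
    """Plan once, then loop build -> evaluate per sprint until it passes."""
    timings = Timings()
    sprints, elapsed = timed(plan, prompt)
    timings.inference_s += elapsed
    for sprint in sprints:
        feedback = None
        for _ in range(max_iterations):
            artifact, elapsed = timed(build, sprint, feedback)
            timings.inference_s += elapsed
            (passed, feedback), elapsed = timed(evaluate, artifact)
            timings.execution_s += elapsed
            if passed:
                break
    return timings
```

Wiring real agents into the three callables and reading `execution_share()` at the end is one way to check whether a given harness is inference-bound or execution-bound before optimizing either side.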

Reliability Matters More Than Speed

Speed is half the story. The other half is what makes these acquisitions strategic rather than just cost optimizations.

Coding agents fail when environments aren’t reproducible. The failure modes are disproportionately tooling problems:

- Non-deterministic dependency resolution. pip doesn’t guarantee identical dependency trees across runs. An agent that tests against one set of versions locally and gets different versions in CI produces flaky, unreliable results.

- Slow feedback loops. When test execution takes minutes, agents can’t iterate fast enough to converge on correct code. They burn tokens and compute waiting.

- Environment drift. Code that passes locally but breaks in CI because of environment inconsistency is wasted work — and agents can’t diagnose environmental differences the way humans do.

These aren’t model problems. No amount of training data fixes non-deterministic package resolution. No model improvement eliminates environment drift. These are infrastructure problems, and both companies are solving them by owning the infrastructure.
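Drift of this kind is easy to detect once both environments are written down. The sketch below compares two lists of pinned packages in `name==version` form, the format `pip freeze` emits. The helper names are hypothetical and the package versions in the usage example are made up for illustration.

```python
from __future__ import annotations


def parse_pins(freeze_output: str) -> dict[str, str]:
    """Parse 'name==version' lines into a {name: version} mapping."""
    pins: dict[str, str] = {}
    for line in freeze_output.splitlines():
        line = line.strip()
        # Skip blanks, comments, and anything that is not an exact pin.
        if not line or line.startswith("#") or "==" not in line:
            continue
        name, version = line.split("==", 1)
        pins[name.strip().lower()] = version.strip()
    return pins


def drift(local: dict[str, str], ci: dict[str, str]) -> dict[str, tuple[str | None, str | None]]:
    """Return packages whose versions differ, as {name: (local, ci)}."""
    return {
        name: (local.get(name), ci.get(name))
        for name in sorted(local.keys() | ci.keys())
        if local.get(name) != ci.get(name)
    }


if __name__ == "__main__":
    local = parse_pins("requests==2.31.0\nurllib3==2.0.7\n")
    ci = parse_pins("requests==2.31.0\nurllib3==2.2.1\nidna==3.6\n")
    for name, (local_version, ci_version) in drift(local, ci).items():
        print(f"{name}: local={local_version} ci={ci_version}")
```

A check like this only reports drift after the fact. A lockfile-enforcing installer prevents it from occurring in the first place, which is the property the next sections focus on.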

AI-generated code makes the reliability problem more urgent. Snyk found that 36-40% of AI-generated code contains security vulnerabilities. CodeRabbit’s analysis found formatting issues appear at 2.66x the rate of human-written code. When agents produce code with higher defect rates than humans, fast and deterministic quality gates aren’t optional — they’re the mechanism that makes autonomous code generation viable.

OpenAI: Owning Every Layer in Python

OpenAI is assembling a vertically integrated Python development stack. Each acquisition fills a specific gap in the agent execution pipeline.

Astral (uv, ruff, ty) — deterministic package management, sub-second linting, type checking.

uv produces a universal lockfile with deterministic dependency resolution. Its --locked flag errors if the lockfile is stale. --frozen skips resolution entirely and installs exactly what’s pinned. Every agent-triggered install produces identical environments — locally and in CI. No drift, no surprises.
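A small wrapper shows how an agent harness might choose between the two modes. This is a sketch under one assumption the text does not spell out: that the flags are passed to the `uv sync` subcommand. The function names and the `mode` parameter are hypothetical.

```python
import subprocess


def uv_sync_command(mode: str) -> list[str]:
    """Build the uv invocation for a given lockfile policy.

    'locked' fails when the lockfile is stale; 'frozen' installs exactly
    what is pinned without re-resolving. Assumes the `uv sync` subcommand.
    """
    flags = {"locked": "--locked", "frozen": "--frozen"}
    if mode not in flags:
        raise ValueError(f"unknown mode {mode!r}; expected one of {sorted(flags)}")
    return ["uv", "sync", flags[mode]]


def install(mode: str = "locked", cwd: str = ".") -> bool:
    """Run the install and report success; requires uv on PATH."""
    result = subprocess.run(uv_sync_command(mode), cwd=cwd)
    return result.returncode == 0
```

One plausible split is `locked` in CI, where a stale lockfile should stop the build, and `frozen` inside an agent's inner loop, where skipping resolution keeps each iteration short.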

Ruff lints the entire CPython codebase in under 300 milliseconds. For an agent that generates code with measurably higher defect rates, a sub-second linter that catches security vulnerabilities, formatting issues, and common bugs before execution is a reliability gate — not just a speed improvement. ty adds type checking to the suite, closing the last major static analysis gap.

Promptfoo — automated security scanning for agentic workflows.

Probes for 50+ vulnerability types including prompt injection, data exfiltration, and jailbreaks. Ships a dedicated GitHub Action for running security scans in CI. OpenAI stated it will “evaluate agentic workflows for security concerns” before production deployment. Promptfoo makes that evaluation a native, automated CI step.

Rockset — real-time codebase indexing.

Gives agents searchable memory over million-line repositories. Instead of regenerating patterns from scratch or hallucinating implementations, agents can retrieve relevant existing code.

The assembled pipeline: generated code is linted (ruff), type-checked (ty), dependency-resolved (uv), security-scanned (Promptfoo), and informed by existing patterns (Rockset) — each step sub-second and deterministic.
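Chained together, the gates behave like a fail-fast sequence. The sketch below is a generic runner, not OpenAI's pipeline: `run_gates` and `GateResult` are hypothetical names, and the command lines in `PYTHON_GATES` are assumptions about how each tool would be invoked in a typical project. Promptfoo and Rockset are left out because the text does not describe their invocation.

```python
import subprocess
import time
from dataclasses import dataclass


@dataclass
class GateResult:
    name: str
    passed: bool
    seconds: float


def run_gates(gates: list[tuple[str, list[str]]]) -> list[GateResult]:
    """Run each gate in order and stop at the first failure."""
    results: list[GateResult] = []
    for name, command in gates:
        start = time.perf_counter()
        completed = subprocess.run(command, capture_output=True, text=True)
        passed = completed.returncode == 0
        results.append(GateResult(name, passed, time.perf_counter() - start))
        if not passed:
            break  # later gates are skipped once one fails
    return results


# Assumed invocations; adjust to the project's actual configuration.
PYTHON_GATES = [
    ("lint", ["ruff", "check", "."]),
    ("types", ["ty", "check"]),
    ("deps", ["uv", "sync", "--locked"]),
    ("tests", ["uv", "run", "pytest"]),
]
```

Recording `seconds` per gate matters as much as the pass/fail result: it shows which gate dominates the agent's loop time.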

Anthropic: One Binary to Rule JavaScript

Anthropic is building the equivalent for JavaScript and TypeScript, with a fundamentally different architectural bet.

Bun is a single binary that handles runtime execution, dependency management, bundling, and testing. Where OpenAI acquired multiple specialized tools, Anthropic acquired one tool that consolidates several layers.

Claude Code ships as a Bun-compiled binary. This isn’t a partnership or integration — it’s the production runtime.

Bun’s test runner executes large suites 11x faster than Jest. For an agent running continuous edit-run-test loops, that’s the difference between a 30-second loop and a 3-second one. bun ci installs from a frozen lockfile and fails the build if dependencies drift. That is the same determinism guarantee uv provides, in a different ecosystem: the fail-on-drift behavior corresponds to uv’s --locked flag, and installing exactly what is pinned corresponds to --frozen.

Every tool boundary an agent crosses is a potential failure point. Shelling out from runtime to package manager to test runner introduces environment variables, path resolution issues, and version mismatches. Bun eliminates several of those boundaries by consolidating them into a single process.

Vercept extends Claude’s capabilities to autonomous UI interaction — navigating and testing complex web applications. It closes the gap between “code that compiles” and “code that works in the browser.”

The tradeoff between approaches: Bun owns one ecosystem end-to-end with fewer integration seams. Astral gives OpenAI deeper, more granular control over each individual layer in Python.

Two Stacks, One Playbook

| Layer | OpenAI (Python) | Anthropic (JS/TS) |
|---|---|---|
| Package management | uv (deterministic lockfile) | Bun (built-in, frozen lockfile) |
| Linting / formatting | ruff (<300ms on CPython) | — |
| Type checking | ty (Astral) | TypeScript (native) |
| Test execution | pytest via uv | Bun test runner (11x vs Jest) |
| Security scanning | Promptfoo (50+ vuln types) | — |
| Codebase indexing | Rockset (real-time) | — |
| UI interaction | — | Vercept |
| Runtime | Python (standard) | Bun (single binary) |

Different ecosystems, identical strategy: own every layer between the model and running, tested code. Make each layer deterministic enough that agents can operate autonomously through CI/CD without human intervention.

The IDE Isn’t Dead

These acquisitions don’t replace VSCode or JetBrains.

The IDE becomes a host environment. Multiple agents, each backed by their own vertically integrated toolchain, run inside your editor simultaneously. The Agent Client Protocol is already being designed as the universal interface between agents and IDEs.

VSCode’s value shifts from being the tool you code in to being the platform where agents execute on your behalf. The agents bring their own runtimes, their own linters, their own test runners. The execution stack travels with the agent, not the IDE.

What This Means for Engineers

The feedback loop is compressing. Your agent generates code, lints it, type-checks it, resolves dependencies, and runs tests. These acquisitions make each step fast enough and deterministic enough to run autonomously.

The engineering skills that compound in this world: constructing intent clearly enough that the agent’s autonomous loop produces the right result, building self-improving environments where agents get better with each iteration, and managing agents at scale — coordinating multiple autonomous loops across a codebase without them stepping on each other. That’s less about writing code and more about understanding your system’s architecture, constraints, and edge cases deeply.

The competition between AI companies has moved from model benchmarks to infrastructure ownership. The model is necessary but not sufficient. The company that controls the fastest, most deterministic path from prompt to running, tested code has the stickiest product.

References

- OpenAI acquires Astral (uv, ruff — 126M monthly downloads)

- OpenAI acquires Promptfoo (350K+ developers, 50+ vulnerability types)

- Anthropic acquires Bun ($1B Claude Code run-rate)

- Anthropic acquires Vercept

- Anthropic: Harness design for long-running application development (multi-agent plan-build-evaluate architecture)

- AI-generated code defect rates: Snyk, CodeRabbit

- “Agents took over VS Code in 2025” — Burke Holland, Microsoft
