Marc Andreessen on Why AI's Messiest Problems Are Its Biggest Opportunity

Key Takeaways

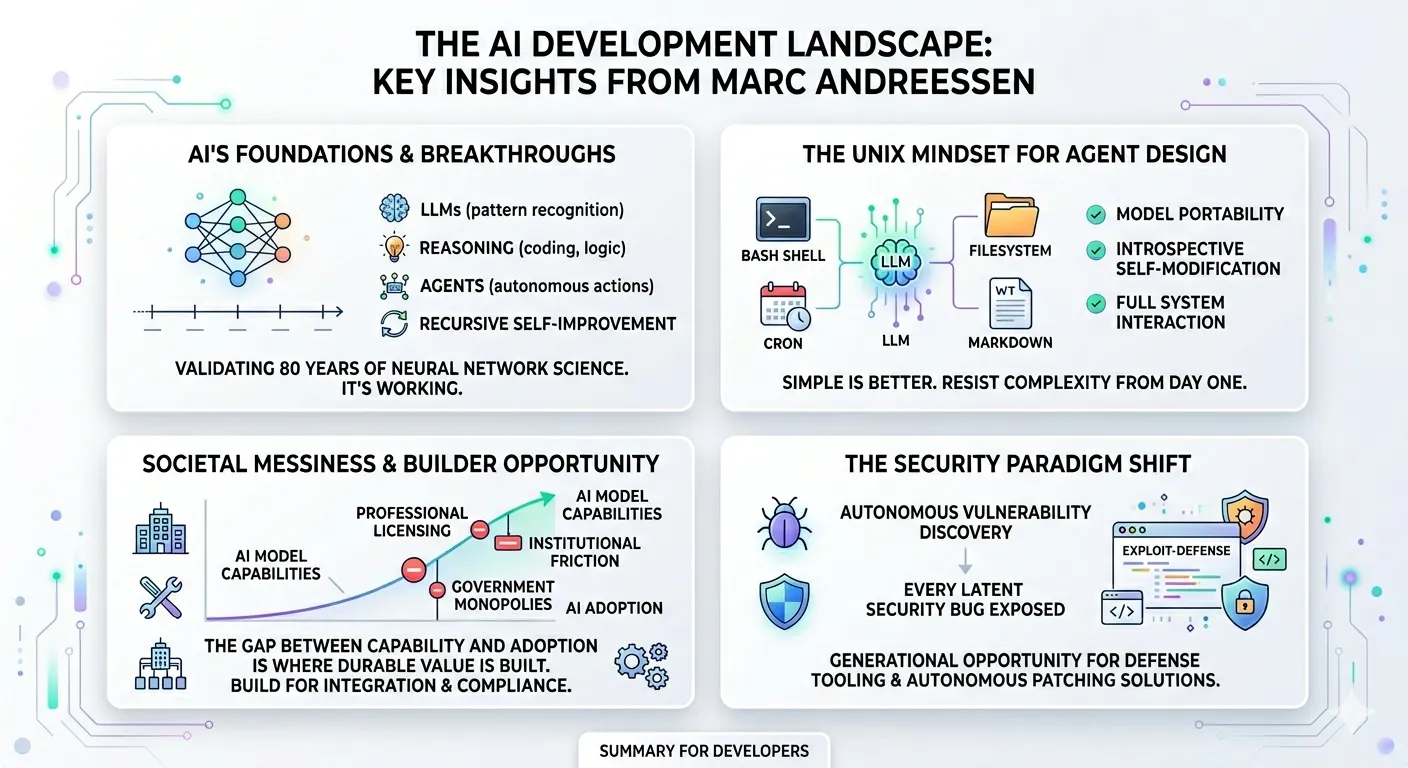

- AI is an 80-year overnight success, not a hype cycle. The current wave draws on foundational research dating to 1943. Four compounding breakthroughs — LLMs, reasoning, agents, and recursive self-improvement — validate that the underlying science was correct all along.

- Society’s messiness is the durable source of value for builders. Professional licensing, union contracts, government monopolies, and institutional inertia mean AI adoption will be slow and uneven — creating enormous surface area for companies that solve real-world integration.

- The Unix mindset is the correct agent architecture. LLM + Bash shell + filesystem + markdown + cron is the agent formula. Every component except the model already existed for decades — and that’s the point.

- The security landscape is about to undergo its most dramatic transformation. Claude Mythos Preview’s autonomous vulnerability discovery — finding more bugs than researchers found in their entire careers — validates the prediction that every latent security bug is about to be exposed.

- Proof of human is the missing internet protocol. Bots now pass the Turing test. Cryptographic biometric verification with selective disclosure isn’t optional — it’s infrastructure.

Marc Andreessen sat down with the Latent Space podcast [7] on April 3, 2026, for a wide-ranging conversation covering everything from the 1943 McCulloch-Pitts neural network paper to why his friend’s AI agent watches him sleep. Beneath the anecdotes is a coherent thesis that software engineers should internalize: the gap between what AI can do and what society will actually let it do is where the next decade of building happens.

This post distills the technical and strategic takeaways from the episode. Days after the podcast aired, Anthropic released Claude Mythos Preview — a model capable enough to discover zero-day vulnerabilities autonomously and score 93.9% on SWE-bench Verified [3]. Where Mythos concretely illustrates Marc’s predictions, particularly around security and compute constraints, we draw the connection.

The 80-Year Overnight Success

Marc’s core framing is that AI isn’t a sudden phenomenon. The neural network was first proposed in 1943 [1]. The Dartmouth workshop, proposed in 1955 and held in 1956, was pitched as cracking AGI within a 10-week summer session. Expert systems boomed and crashed in the 1980s. AlexNet hit in 2012. Transformers arrived in 2017. Then came a strange four-year lull in which companies like Google had internal chatbots but wouldn’t deploy them.

The point isn’t historical trivia. It’s structural: the scientists who worked on neural networks for decades were fundamentally correct about the architecture. Many never lived to see the payoff — John McCarthy taught AI at Stanford for 40 years and passed away before ChatGPT shipped. But the research compounded.

What’s different now, Marc argues, is that four distinct breakthroughs have arrived in rapid succession:

- Large language models: the ChatGPT moment (2022)

- Reasoning: o1 and R1 proving models can work in domains that matter, namely coding, medicine, and law (2024–2025)

- Agents: OpenClaw demonstrating autonomous, self-extending AI systems (2025–2026)

- Recursive self-improvement: models improving their own training and scaffolding (2026)

Each breakthrough validates the previous one. Reasoning proved LLMs aren’t just pattern completion. Coding proved reasoning works in the real world. Agents proved coding systems can operate autonomously. And recursive self-improvement (RSI) proves agents can improve themselves.

Marc is blunt: “I think it would be essentially suicidal to bet that this is going to somehow disappoint.”

The “four most dangerous words in investing are ‘this time is different,’” he acknowledges. His counter: “What’s different? Now it’s working.”

The Unix Mindset for Agents

The most technically substantive part of the podcast is Marc’s analysis of Pi — the minimalist TypeScript agent framework created by Mario Zechner that powers OpenClaw — and why its architecture represents a fundamental conceptual breakthrough.

Marc’s formulation:

Agent = LLM + Bash shell + filesystem + markdown + cron

Every component except the language model has existed for decades. That’s not a weakness; it’s the point. The architecture inherits the full latent power of Unix.

Pi enforces this philosophy ruthlessly. The entire framework is ~4,000 lines of TypeScript with only four tools: read, write, edit, and bash [2]. The system prompt is under 1,000 tokens. No MCP. No specialized integrations. Everything routes through the shell.
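To make the four-tool idea concrete, here is a minimal Python sketch of a read/write/edit/bash dispatcher wrapped in an agent loop. It is an illustration, not Pi’s actual code (Pi is written in TypeScript): the function names, the action dictionary shape, and the `scripted_model` stand-in for an LLM are all assumptions made for this example.

```python
import subprocess
from pathlib import Path


def tool_read(path: str) -> str:
    """Return the full text of a file."""
    return Path(path).read_text()


def tool_write(path: str, content: str) -> str:
    """Create or overwrite a file."""
    Path(path).write_text(content)
    return f"wrote {len(content)} chars to {path}"


def tool_edit(path: str, old: str, new: str) -> str:
    """Replace exactly one occurrence of `old`; refuse ambiguous edits."""
    text = Path(path).read_text()
    matches = text.count(old)
    if matches != 1:
        return f"error: expected 1 match, found {matches}"
    Path(path).write_text(text.replace(old, new))
    return f"edited {path}"


def tool_bash(command: str, timeout: int = 30) -> str:
    """Run a shell command and return combined stdout and stderr."""
    result = subprocess.run(
        ["bash", "-c", command], capture_output=True, text=True, timeout=timeout
    )
    return result.stdout + result.stderr


TOOLS = {"read": tool_read, "write": tool_write, "edit": tool_edit, "bash": tool_bash}


def run_agent(model, task: str, max_steps: int = 10) -> list:
    """Ask the model for one action per step, run it, and record the result."""
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        # Hypothetical contract: the model returns either
        # {"tool": name, "args": {...}} or {"done": final_message}.
        action = model(history)
        if "done" in action:
            history.append({"role": "assistant", "content": action["done"]})
            break
        output = TOOLS[action["tool"]](**action["args"])
        history.append({"role": "tool", "name": action["tool"], "content": output})
    return history


def scripted_model(steps):
    """Stand-in for an LLM: replays a fixed list of actions in order."""
    iterator = iter(steps)
    return lambda history: next(iterator)
```

Everything beyond these four functions (browsing, package installs, network calls) would be reached through `tool_bash`, which is the design choice the architecture rests on. Running shell commands chosen by a model is dangerous outside a sandbox, so a real deployment would need isolation around this loop.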

Marc draws an explicit parallel to the transition from IBM’s monolithic OS/360 to the Unix philosophy of composable, chainable modules. OS/360 was a giant castle of software — unapproachable unless you were inside IBM. Unix said: give people a prompt, a shell, and discrete modules they can chain together. The operating system itself becomes a programming language.

The same pattern is playing out now. Complex agent frameworks with custom protocols are the OS/360 approach. Pi’s bash-first design is Unix.

Three properties matter for engineers:

- Model portability. Your agent’s state lives in files, not in model weights. Swap Claude for GPT and the agent keeps its memories and capabilities. Pi’s .jsonl session format normalizes thinking traces across providers [2].

- Self-modification. The agent has full introspective access to its own code. Tell it “add a new capability to yourself” and it reads its own files, writes new functionality, and integrates it. Marc: “No widely deployed software system in history has had that level of self-modification.”

- Computer use for free. If the agent has shell access, it already controls your computer. Browser automation, file management, network discovery: these aren’t features to build. They’re consequences of the architecture.
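The model-portability property can be sketched as a session log kept in a JSON Lines file: one record per line, appended as the conversation proceeds and replayed to whichever model is in use. The record fields below (`role`, `content`, `provider`) are hypothetical and are not Pi’s actual session schema.

```python
import json
from pathlib import Path


def append_event(session_path: Path, role: str, content: str, provider: str) -> None:
    """Append one record to the session file as a single JSON line."""
    record = {"role": role, "content": content, "provider": provider}
    with session_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def load_history(session_path: Path) -> list:
    """Rebuild the conversation from disk, dropping the provider tag."""
    if not session_path.exists():
        return []
    history = []
    with session_path.open(encoding="utf-8") as f:
        for line in f:
            if line.strip():
                record = json.loads(line)
                history.append({"role": record["role"], "content": record["content"]})
    return history
```

Because `load_history` returns the same provider-neutral list regardless of which model wrote each line, a session started with one provider can be resumed with another. The memory lives in the file, not in the model.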

The key insight for builders is that the Unix mindset isn’t just an architecture — it’s a discipline. Start with the simplest possible agent: a model, a shell, and a filesystem. Resist the urge to bolt on specialized tools, custom protocols, or elaborate orchestration frameworks from day one. Let real-world usage reveal what’s actually missing before you add complexity. When you do add layers — error recovery, observability, multi-agent coordination — build them around the simple core rather than replacing it. The agent that does four things reliably will always beat the agent that does forty things unpredictably. Simpler is better, and the burden of proof should always be on the added complexity to justify its existence.

Society Is Messy — And That’s Where You Build

This is the section that matters most for engineers thinking about what to build.

Marc parts ways with what he calls the “AI purists” — lab researchers who can’t understand why society doesn’t just adopt their recommendations. His argument:

“There’s no single society. It’s 8 billion people. They all have a voice and they all have a vote. Human reality is just really complicated and messy.”

He provides concrete examples of institutional friction that no amount of model capability will bypass:

- 900 hours of professional certification to become a hairdresser in California

- ~35% of the economy requires some form of professional licensing

- 25,000 dock workers blocked port automation — with 25,000 more drawing full paychecks at home from prior union agreements

- K-12 education is a government monopoly. Teachers are 100% opposed to AI integration. Marc’s assessment: “There’s no chance.”

- Federal agencies with COVID-era collective bargaining agreements requiring one day in office per month — entire buildings empty 58 out of 60 days

Marc’s conclusion is sharp: “Both the AI utopians and the AI doomers are far too optimistic. They believe that because the technology makes something possible, 8 billion people are going to change how they behave.”

For software engineers, this reframes the opportunity. The question isn’t “what can AI do?” — that’s increasingly everything. The question is “what does the messy process of getting AI into real-world institutions actually require?”

The answer: a lot of software. Integration layers. Compliance wrappers. Domain-specific adapters. Workflow tools that bridge the gap between what an agent can do in a sandbox and what a regulated industry will accept.
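As one illustration of what a compliance wrapper might look like, the sketch below gates every agent action behind an allow-list, routes sensitive actions to a human reviewer, and records each decision in an audit trail. The class name, action names, and decision labels are assumptions invented for this example; a real regulated deployment would have far more detailed requirements.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PolicyGate:
    """Wraps agent actions with an allow-list, human approval, and an audit log."""

    allowed_actions: set
    needs_approval: set
    approver: Callable[[str, dict], bool]  # hypothetical human-in-the-loop callback
    audit_log: list = field(default_factory=list)

    def execute(self, action: str, params: dict, handler: Callable[[dict], str]) -> str:
        # Decide first, log always, and only then run the handler.
        if action not in self.allowed_actions:
            decision = "denied"
        elif action in self.needs_approval and not self.approver(action, params):
            decision = "rejected_by_reviewer"
        else:
            decision = "allowed"
        self.audit_log.append(
            {"ts": time.time(), "action": action, "params": params, "decision": decision}
        )
        if decision != "allowed":
            return f"{action}: {decision}"
        return handler(params)
```

The point of the design is that the agent’s capability is unchanged; what the institution gets is a record of every attempted action and a veto over the ones it cares about.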

Consider Anthropic’s Claude Mythos Preview, released just days after this podcast. Mythos achieves 93.9% on SWE-bench Verified, discovers zero-day vulnerabilities autonomously, and demonstrates agentic capabilities so advanced that Anthropic restricted its release to 12 founding partners through Project Glasswing [5] — not because of institutional friction, but because they consider the safety risk too high for broad deployment [4]. That’s a different constraint than the ones Marc describes. But here’s what matters for builders: even if Mythos were broadly available tomorrow, it wouldn’t change the 900-hour hairdresser licensing requirement. It wouldn’t renegotiate the dock workers’ contracts. It wouldn’t convince a single teachers’ union to welcome AI into classrooms. Model capabilities keep compounding. The societal friction Marc describes doesn’t budge. That gap — between what the technology can do and what institutions will allow — is what makes the integration layer a durable place to build.

The Security Apocalypse (and Opportunity)

Marc makes a prediction that deserves its own section:

“Every single latent security bug is about to be exposed. We’re set up for the computer security apocalypse for a while, but on the other side of it, now we have coding agents that can go in and actually fix all the security bugs.”

Claude Mythos Preview’s system card provides the most concrete evidence for both sides of this prediction [4]:

The exposure side:

- On Firefox exploit development, Opus 4.6 produced 2 successful exploits. Mythos succeeded 181 times and achieved register control on 29 more attempts

- On the OSS-Fuzz corpus (7,000 entry points), Mythos found 595 crashes including tier-5 control flow hijacks on 10 fully-patched targets

- Nicholas Carlini, a security researcher involved in testing: “More bugs in the last couple of weeks than I found in the rest of my life combined” [4]

- A 27-year-old OpenBSD bug was discovered for under $50 in API costs

The defense side:

- 89% of Mythos’s vulnerability reports matched human severity assessments exactly

- Validation accuracy was 98% within one severity level

- The model can autonomously construct multi-stage exploit chains — meaning it can autonomously validate whether patches actually resolve vulnerabilities
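The two agreement figures above (exact match and within one severity level) are simple to compute once severities are expressed as integers. The sketch below shows the calculation on made-up data; the integer scale and the sample ratings are assumptions for illustration and do not come from the system card.

```python
def severity_agreement(model_levels, human_levels):
    """Return (exact_match_rate, within_one_level_rate) for paired severity ratings."""
    if len(model_levels) != len(human_levels) or not model_levels:
        raise ValueError("need two non-empty lists of equal length")
    n = len(model_levels)
    pairs = list(zip(model_levels, human_levels))
    exact = sum(1 for m, h in pairs if m == h)
    within_one = sum(1 for m, h in pairs if abs(m - h) <= 1)
    return exact / n, within_one / n


# Illustrative ratings only: five reports on an assumed 1-5 scale.
model_ratings = [3, 4, 2, 5, 1]
human_ratings = [3, 4, 3, 5, 3]
exact_rate, near_rate = severity_agreement(model_ratings, human_ratings)
```

In this toy data three of five ratings match exactly and four of five land within one level, so the function returns 0.6 and 0.8. The within-one rate can never be lower than the exact rate, which is why the two reported numbers are ordered the way they are.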

For software engineers, this means two things. First, automated security auditing at scale is now real — not theoretical. Second, the window between “attackers have autonomous exploit tools” and “defenders have autonomous patching tools” is the most dangerous period in computing history. The companies that close that gap fastest will define the next generation of security infrastructure.

Marc’s “society is messy” framework applies here too. The technology for autonomous security auditing exists. The messy process of deploying it across millions of codebases, integrating with existing CI/CD pipelines, navigating liability, and building enterprise trust — that’s where companies get built.

Supply Constraints Are Sandbagging the Models

Marc makes a counterintuitive argument: the models we use today are inferior versions of what we’d have without supply constraints.

If GPUs were 10x cheaper and more plentiful, labs would allocate more compute to training and build better models. Marc also claims that every publicly available model is quantized, with the labs keeping the full-precision versions for themselves. On this view, we’re getting the sandbag version of the technology.

He projects chronic supply shortage for 3–4 years, with the entire supply chain sold out. The implications:

- Inference costs may rise despite algorithmic improvements, because demand growth outpaces supply expansion

- Agent workloads compound the problem. Agents don’t just need GPUs for inference — they need CPUs and memory for tool execution, creating bottlenecks across the entire chip ecosystem

- Edge inference becomes critical. Marc points to Apple Silicon innovations and to the recurring pattern where “the big model will never run on a PC, and six months later, it runs on a PC”

Mythos Preview’s pricing illustrates the constraint: $25 / $125 per million input/output tokens — 5x Opus 4.6’s pricing, available only through the restricted Glasswing program [6]. Marc’s friends are already paying $1,000/day for Claude tokens running OpenClaw. At Mythos pricing, latent demand of $5,000–$10,000/day per fully deployed personal agent isn’t unrealistic.
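The arithmetic behind that estimate can be checked with a few lines of Python. The Mythos prices are the ones quoted above; the baseline prices of $5 / $25 are simply what “5x” implies, and the daily token volumes are a hypothetical workload chosen so that the baseline comes to $1,000/day.

```python
def daily_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Dollar cost of one day's usage, given per-million-token prices."""
    return (input_tokens / 1_000_000) * input_price_per_m + (
        output_tokens / 1_000_000
    ) * output_price_per_m


# Hypothetical agent workload: 100M input tokens and 20M output tokens per day.
INPUT_TOKENS = 100_000_000
OUTPUT_TOKENS = 20_000_000

baseline = daily_cost(INPUT_TOKENS, OUTPUT_TOKENS, 5, 25)    # prices implied by "5x"
mythos = daily_cost(INPUT_TOKENS, OUTPUT_TOKENS, 25, 125)    # prices quoted above
ratio = mythos / baseline
```

With these assumptions the baseline is $1,000/day and the same workload at Mythos pricing is $5,000/day, the low end of the range. Reaching $10,000/day would require roughly doubling the token volume, which is plausible if a more capable model is given more work.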

There’s a silver lining for the current chip economics. Marc highlights a phenomenon that’s “literally never happened before”: older NVIDIA GPUs are becoming more valuable over time. The pace of software improvement is faster than the hardware depreciation cycle, meaning a three-year-old inference chip generates more revenue today than when it was new.

This supply-demand gap is bullish for open-source AI, edge inference, and model efficiency research. The Chinese open-source labs (DeepSeek, Qwen, and others) are contributing enormously — not just as free software, but as free education. DeepSeek’s R1 paper taught the entire world how to do reasoning. Three months later, every model had it.

Proof of Human: The Missing Protocol

Marc identifies two asymmetric threats — one virtual, one physical — that share the same structure:

- Virtual: Bots now pass the Turing test. You can’t detect them. You need proof of human — not proof of “not bot.”

- Physical: Cheap attack drones create an analogous asymmetry. Cheap to field, expensive to defend against.

His proposed solution for the virtual side: cryptographic biometric verification with selective disclosure. Prove you’re human without revealing your name. Prove your age without revealing your birthday. Prove creditworthiness without revealing your financial history.

He points to the World project as architecturally correct: biological validation as the root of trust, then cryptographic proof layered on top.
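Selective disclosure can be illustrated with salted hash commitments: an issuer commits to each claim separately and signs the set of commitments, and the holder later reveals only the claims a verifier needs. The sketch below is a toy model under loud assumptions. It is not the World project’s protocol, the function and claim names are invented, and the HMAC is a stand-in for the asymmetric signature a real system would use (with HMAC the verifier must share the issuer’s key).

```python
import hashlib
import hmac
import json
import secrets
from typing import Optional


def _digest(salt: str, name: str, value) -> str:
    """Salted commitment to a single claim."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def _sign(digests: list, issuer_key: bytes) -> str:
    """Stand-in signature over the commitment list (HMAC, not a real signature)."""
    return hmac.new(issuer_key, json.dumps(digests).encode(), hashlib.sha256).hexdigest()


def issue_credential(claims: dict, issuer_key: bytes):
    """Issuer: commit to every claim and sign the sorted commitments."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(_digest(salts[n], n, v) for n, v in claims.items())
    credential = {"digests": digests, "signature": _sign(digests, issuer_key)}
    return credential, salts


def present(credential: dict, claims: dict, salts: dict, reveal: list) -> dict:
    """Holder: disclose only the chosen claims, each with its salt."""
    disclosed = [{"name": n, "value": claims[n], "salt": salts[n]} for n in reveal]
    return {"credential": credential, "disclosed": disclosed}


def verify(presentation: dict, issuer_key: bytes) -> Optional[dict]:
    """Verifier: check the signature, then check each disclosed claim's commitment."""
    cred = presentation["credential"]
    if not hmac.compare_digest(_sign(cred["digests"], issuer_key), cred["signature"]):
        return None
    verified = {}
    for item in presentation["disclosed"]:
        if _digest(item["salt"], item["name"], item["value"]) not in cred["digests"]:
            return None
        verified[item["name"]] = item["value"]
    return verified
```

A holder with claims such as `is_human`, `age_over_18`, and `name` could present only `is_human`; the verifier learns that one fact and sees only opaque hashes for the rest. Note that a claim like `age_over_18` has to be precomputed by the issuer here, whereas proving a predicate over a hidden birthday directly would need zero-knowledge techniques beyond this sketch.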

This matters for engineers building agent-to-agent systems. HTTP 402 (Payment Required) has been reserved for future use, and effectively unused, since the HTTP/1.1 specification of 1999, and agent commerce will need both payment rails and identity verification. Marc frames crypto stablecoins as AI’s killer app: the convergence of AI and crypto that many have predicted but none have delivered.
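A paid endpoint built on 402 could behave roughly as sketched below: without valid proof of payment the server answers 402 and states its price, and with proof it serves the resource. No standard payment scheme is attached to this status code, so the header names (`X-Payment-Proof`, `X-Price-Cents`) and the `verify_payment` callback are hypothetical.

```python
from http import HTTPStatus


def handle_request(headers: dict, price_cents: int, verify_payment) -> tuple:
    """Return (status, response_headers, body) for a pay-per-request endpoint."""
    token = headers.get("X-Payment-Proof")  # hypothetical header name
    if token is None or not verify_payment(token, price_cents):
        # Tell the calling agent what it owes so it can pay and retry.
        return (
            HTTPStatus.PAYMENT_REQUIRED,
            {"X-Price-Cents": str(price_cents)},  # hypothetical header name
            "payment required",
        )
    return (HTTPStatus.OK, {}, "resource body")
```

An agent client would read the price from the 402 response, settle it over whatever payment rail both sides support, and repeat the request with the proof attached.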

What This Means for Software Engineers

Marc’s thesis distills to a simple framework: the technology is real, the adoption will be messy, and the mess is where you build.

Three concrete implications:

- Build for the integration layer, not the model layer. If you’re building something that works only because the current model can’t do X, the next model will eat you. If you’re building something that helps a regulated industry actually adopt AI (compliance, workflow integration, domain-specific trust), that’s durable value.

- Learn the agent architecture. LLM + shell + filesystem + markdown + cron is converging as the industry standard. Pi’s four-tool constraint demonstrates that fewer general tools beat many specialized ones when the model is capable enough. Understanding how to build, extend, and secure agents in this paradigm is the highest-leverage skill investment right now.

- Treat security as a first-class product category. The autonomous vulnerability discovery capabilities demonstrated by Mythos Preview will get cheaper and more accessible. The window between “attackers can do this” and “defenders can do this at scale” is a generational opportunity for security tooling companies.

Marc opened the podcast by saying that if he were 18, AI is what he’d spend all his time on. Having watched 80 years of research compound into the breakthroughs we’re living through, it’s hard to disagree. The technology is real. The opportunity is in the mess.

References

[1] Warren S. McCulloch, Walter Pitts. “A Logical Calculus of the Ideas Immanent in Nervous Activity.” Bulletin of Mathematical Biophysics, 5:115–133, 1943. Paper

[2] Armin Ronacher. “Pi: A Minimal Coding Agent.” January 2026. Blog Post | Shivam Agarwal. “Agentic AI Pi: Anatomy of a Minimal Coding Agent Powering OpenClaw.” Analysis

[3] NxCode. “Claude Mythos Benchmarks: 93% SWE-Bench, Every Record Broken.” April 2026. Article

[4] Anthropic. “Claude Mythos Preview.” April 2026. System Card

[5] Anthropic. “Project Glasswing.” April 2026. Announcement

[6] WaveSpeedAI. “Claude Mythos API & Pricing.” April 2026. Article

[7] Latent Space Podcast. “Marc Andreessen introspects on The Death of the Browser, Pi + OpenClaw, and Why ‘This Time Is Different.’” April 3, 2026. Episode
